Defining ‘Wrong’ in AI Detection

When people say AI detectors are ‘wrong,’ they do not mean a minor technical miss. They mean a classification error that influences decisions about student work and academic integrity. Studies usually point to two specific failures:

- The system flags human writing as AI-generated

- The system fails to identify text that relies heavily on generative AI

Both outcomes distort the truth, but they affect students and teachers differently. A false positive places suspicion on the student, potentially damaging trust and their academic record. A false negative, on the other hand, lets AI-generated text pass without the teacher noticing, which gradually weakens evaluation standards.
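The two failures correspond to the standard false positive and false negative rates. Here is a minimal sketch of how they could be computed, assuming a small labeled sample where the true origin of each text is known. The function name and the sample data are illustrative assumptions, not taken from any detector or study.

```python
def error_rates(truth, flagged):
    """Return (false_positive_rate, false_negative_rate).

    truth[i]   is True when text i really was AI-generated.
    flagged[i] is True when the detector labeled text i as AI.
    """
    # Human-written texts that were flagged as AI.
    fp = sum(1 for t, f in zip(truth, flagged) if not t and f)
    # AI-generated texts that were not flagged.
    fn = sum(1 for t, f in zip(truth, flagged) if t and not f)
    humans = sum(1 for t in truth if not t)
    ais = sum(1 for t in truth if t)
    fpr = fp / humans if humans else 0.0
    fnr = fn / ais if ais else 0.0
    return fpr, fnr


# Hypothetical sample: 4 human texts followed by 4 AI texts.
truth = [False, False, False, False, True, True, True, True]
flagged = [True, False, False, False, True, True, False, False]

fpr, fnr = error_rates(truth, flagged)
print(fpr, fnr)  # 0.25 0.5
```

The two rates use different denominators: false positives are counted against human-written texts only, and false negatives against AI-generated texts only. That is one reason a single accuracy figure hides so much.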

Ultimately, accuracy cannot be reduced to a single percentage. Understanding how AI detectors go wrong requires looking at how errors show up, how often they occur, and how detection tools interpret different writing styles across real student populations.

Where Detection Results Become Unclear

Many detection tools avoid firm yes-or-no judgments because most writing does not fit a single category. Labels like “mixed,” “unclear,” or “likely AI” appear when the software encounters language patterns that overlap. Hybrid writing creates most of this overlap. Students often use AI to brainstorm ideas or clean up grammar, then revise the text themselves, so the final draft reflects both processes. Detection software evaluates statistical language patterns rather than intent or authorship, so scores are unstable and shift with writing style.
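To see why such labels can flip so easily, consider a rough sketch of how a score might be mapped to a label. The cutoffs (0.3 and 0.7) and the label names are hypothetical. Real tools use their own thresholds and generally do not publish them.

```python
def label_for(score, low=0.3, high=0.7):
    """Map an AI-likelihood score in [0, 1] to a coarse label.

    The `low` and `high` cutoffs are assumptions for illustration only.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score < low:
        return "likely human"
    if score < high:
        return "mixed"
    return "likely AI"


# A small shift in score near a cutoff changes the label entirely.
print(label_for(0.69))  # mixed
print(label_for(0.71))  # likely AI
```

Two drafts whose scores differ by only 0.02 end up with different labels, which is why a label should be read together with the underlying score and the context of the assignment.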

AI Detection Error Rates in Practice

Accuracy claims from AI detection vendors often sound confident, while independent research paints a more complicated picture. Once these tools are tested on real student writing, error rates rise quickly. A 2023 Stanford study of several widely used detectors found that about 97% of TOEFL essays written by non-native English speakers were flagged as AI-generated by at least one detector. On average, the detectors in that study labeled more than half of the TOEFL essays as AI-generated.

Performance also shifts based on how a text is written. Length matters, for example. Copyleaks reports false positives under 1% for long-form essays, yet accuracy drops on texts under 350 words. Writing style matters too: simple paraphrasing or light edits can allow AI-generated material to pass unnoticed. Together, these results show that detection accuracy depends heavily on language background, text length, and stylistic choices rather than detector quality alone.

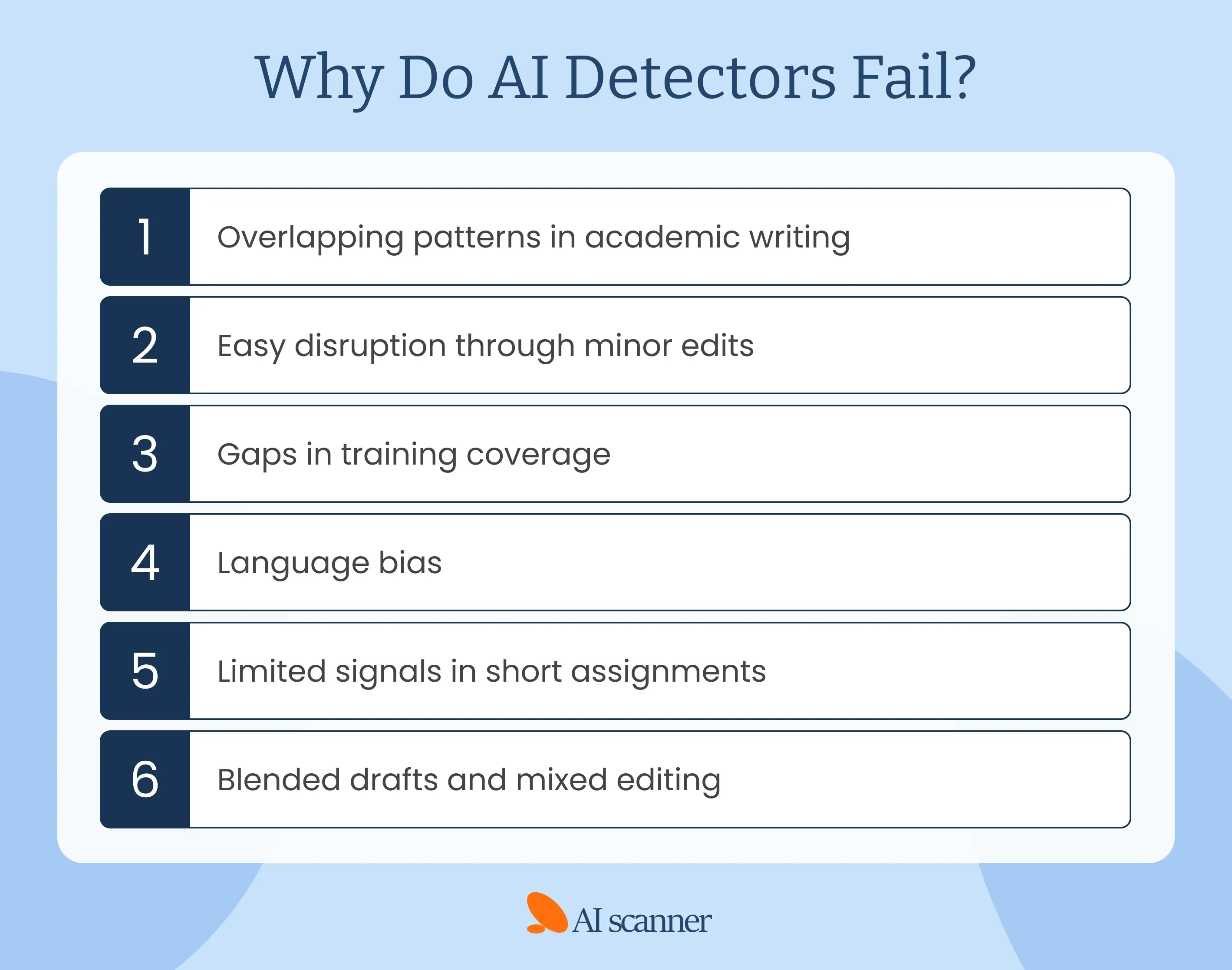

Why Do AI Detectors Fail?

Detection errors do not happen at random. They follow a small set of recurring causes that shape AI detection scores and influence how writing is interpreted. Let’s look closely at the reasons why the detectors sometimes give us unreliable results.

Overlapping Patterns in Academic Writing

Academic writing encourages students to be clear, structured, and consistent. AI models are trained to produce the same traits. As a result, strong human writing often resembles AI output. Predictable transitions, balanced sentences, and formal tone raise similarity signals. Detection tools do not evaluate effort or authorship. They compare patterns, and that overlap leads to false positives, especially for disciplined writers who follow academic conventions.

Easy Disruption Through Minor Edits

Most detection systems rely on pattern stability. Minor changes can quickly disrupt those patterns. Paraphrasing a few sentences, varying structure, or adding personal phrasing often lowers the detection score. The content itself may remain AI-assisted, yet the altered rhythm reduces detection signals. This limitation explains why false negatives persist even as tools improve.

Gaps in Training Coverage

Detection models depend on training data that lags behind new AI writing behaviors. As generative tools evolve, detectors struggle to keep pace. New phrasing habits, prompt strategies, and editing workflows emerge faster than training updates. That gap weakens reliability over time and increases inconsistency across tools.

Language Bias

Research shows higher error rates for non-native English speakers. Repeated phrasing, simpler syntax, or limited vocabulary often trigger detection flags. These traits reflect language background or learning style, not AI use. Bias skews detection results and raises fairness concerns in academic settings.

Limited Signals in Short Assignments

Short texts provide fewer data points. Under 350 words, detection accuracy drops because statistical signals become unstable. In such cases, there are not enough language patterns to analyze, so small quirks get overinterpreted. That makes results less reliable and confidence scores harder to trust.
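One way to picture this instability is the standard error of an average. The sketch below assumes, as a simplification, that a detector's score behaves like the mean of independent per-word measurements with the same spread. Real detectors are more complex, so treat this as an intuition aid and not a model of any specific tool.

```python
import math


def standard_error(per_word_std, n_words):
    """Standard error of a mean over n_words independent measurements."""
    if n_words <= 0:
        raise ValueError("n_words must be positive")
    return per_word_std / math.sqrt(n_words)


# Hypothetical per-word spread of 1.0; only the text length changes.
for n in (100, 400, 1600):
    print(n, round(standard_error(1.0, n), 3))
```

Under this assumption, the noise in the score only halves when the text becomes four times longer, so a short paragraph carries far more uncertainty than a full essay.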

Blended Drafts and Mixed Editing

Modern writing often blends AI assistance with human revision. Students brainstorm with AI, adjust grammar, then rewrite sections manually. Detection tools analyze only the final draft. Mixed editing produces overlapping signals that confuse classification and result in uncertain or misleading scores.

How Much “Wrong” Is Acceptable?

On paper, low error rates look harmless. In real classrooms, however, they add up fast. A 1% false-positive rate means that roughly 1 in every 100 honestly written submissions gets flagged, and its author is questioned about work they actually wrote. Large courses turn that into several students per semester. Across a university, it becomes a steady stream of cases, each bringing stress, confusion, and damage to trust between students and teachers.

The problem grows with repeated use. Detection software often runs on multiple assignments across multiple courses. Small mistakes stack over time. What feels acceptable during testing starts to feel reckless in daily academic use. That is why acceptable error thresholds must stay extremely low, and why a detection result must never stand alone. Detection tools can point to possible issues, yet final judgment needs context, conversation, and human responsibility.
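The arithmetic behind this stacking effect can be sketched in a few lines. The example assumes every submission is honestly written and that each check is independent with the same false-positive rate. Both are simplifications, and the course size and number of checks are made-up figures.

```python
def expected_false_flags(fp_rate, n_submissions):
    """Expected number of honest submissions flagged by mistake."""
    return fp_rate * n_submissions


def prob_flagged_at_least_once(fp_rate, n_checks):
    """Chance that one honest student is flagged at least once in n_checks."""
    return 1.0 - (1.0 - fp_rate) ** n_checks


# Hypothetical 300-student course, one checked assignment, 1% rate.
print(expected_false_flags(0.01, 300))

# Hypothetical student whose work is checked 40 times during a degree.
print(round(prob_flagged_at_least_once(0.01, 40), 3))
```

With these assumed numbers, a single assignment produces about three wrongly flagged students, and an honest student checked 40 times has roughly a one-in-three chance of being flagged at some point.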

Consequences of Failing AI Detectors

When AI detectors fail, the fallout rarely stays technical. Errors ripple through classrooms, affect how students are treated, and place institutions in difficult positions. The risks below show what happens when AI writing decisions rely too heavily on imperfect tools.

- Academic Scrutiny Without Cause. Students can be pulled into integrity reviews even after submitting original work. These situations create anxiety, force explanations, and may leave lasting marks on academic records.

- Loss of Trust in Assessment. Repeated misclassification shifts how instructors read assignments. Suspicion replaces the assumption of good faith, which strains the student-teacher relationship.

- Unnoticed Misuse. False negatives allow AI writing to pass without question. Over time, that undermines fairness across a course, because students who did their own work are graded against those who did not.

- Uneven Impact on Different Writers. Students with varied language backgrounds or learning styles face higher error rates. That pattern raises serious concerns about bias and equal treatment.

- Reputational and Legal Risk. When flawed detection drives decisions, institutions face appeals, complaints, and potential public scrutiny.

Responsible Use of AI Detectors

AI detectors are most effective when they support judgment rather than replace it. Responsible use means accepting their limits, understanding how results are produced, and applying them carefully within real academic and professional workflows.

Keep Human Review at the Center

Detection results should always be reviewed by a person. Software can identify unusual patterns, but it cannot explain how a text was produced. A person can ask follow-up questions, review drafts, and understand context in ways tools cannot. Human oversight protects against rushed or unfair conclusions.

Read Results as Signals, Not Decisions

AI detection outputs reflect likelihood, not certainty. A score does not prove intent or authorship. Treating results as one signal among many keeps the process grounded. This approach reduces overreaction and leaves room for explanation and evidence.

Use More Than One Tool

Different detectors focus on different signals. Comparing results across tools helps expose inconsistencies and avoids blind trust in a single system. Cross-checking improves balance and reduces dependence on any one model’s assumptions.
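A cross-check can be as simple as lining up the scores and looking for disagreement. The sketch below uses hypothetical detector names, scores, and a 0.5 cutoff. It does not call any real detection service.

```python
def cross_check(scores, threshold=0.5):
    """Compare AI-likelihood scores (0 to 1) from several detectors.

    `scores` maps a detector name to its score. The threshold is an
    assumed cutoff for illustration.
    """
    if not scores:
        raise ValueError("need at least one detector score")
    votes = {name: s >= threshold for name, s in scores.items()}
    return {
        "votes": votes,
        # True only when every detector lands on the same side.
        "agree": len(set(votes.values())) == 1,
        # Gap between the most and least suspicious detector.
        "spread": max(scores.values()) - min(scores.values()),
    }


result = cross_check({"detector_a": 0.9, "detector_b": 0.2, "detector_c": 0.55})
print(result["agree"], round(result["spread"], 2))  # False 0.7
```

A wide spread or split votes is a sign that the text sits in a gray zone and that none of the individual scores should be taken at face value.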

Design Assignments That Show Process

Assignments that require drafts, reflections, or in-class components reduce misuse naturally. Clear process expectations also make detection results easier to interpret when questions arise.

Teach the Limits Clearly

Students and instructors should understand error margins and common failure points. Open discussion about limits builds transparency and encourages responsible AI usage rather than secrecy.

Monitor Bias and Performance Over Time

Detection tools change as models evolve. Regular calibration and bias reviews help identify uneven performance across language backgrounds, writing styles, and student groups.
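A basic bias review compares false-positive rates across groups in a sample of texts whose origin has been verified by hand. The following sketch assumes such a sample exists. The group labels and records are invented for illustration.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """records: iterable of (group, is_ai, flagged) tuples from a reviewed sample."""
    human_total = defaultdict(int)
    human_flagged = defaultdict(int)
    for group, is_ai, flagged in records:
        if is_ai:
            continue  # false positives only concern human-written texts
        human_total[group] += 1
        if flagged:
            human_flagged[group] += 1
    return {g: human_flagged[g] / human_total[g] for g in human_total}


# Hypothetical reviewed sample: all texts are human-written.
sample = [
    ("native", False, False), ("native", False, False),
    ("native", False, False), ("native", False, True),
    ("non_native", False, True), ("non_native", False, True),
    ("non_native", False, False), ("non_native", False, False),
]
rates = false_positive_rate_by_group(sample)
print(rates)  # {'native': 0.25, 'non_native': 0.5}
```

Running a check like this on a regular schedule makes it possible to notice when a tool update widens or narrows the gap between groups.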

Final Words

AI detectors are widely used, yet their accuracy varies more than most claims suggest. Research shows consistent errors tied to text length, writing style, and language background. False positives carry real academic consequences, while false negatives allow misuse to pass unnoticed. These limits make context and human judgment essential.

If you're looking for detection software for your writing, you can rely on AI Scanner, but still make sure to interpret results carefully and make informed decisions.

Sources

- AI Detectors Don’t Work. Here’s What to Do Instead. (2024). MIT Sloan Teaching & Learning Technologies. https://mitsloanedtech.mit.edu/ai/teach/ai-detectors-dont-work/

- Detecting AI May Be Impossible. That’s a Big Problem For Teachers. (n.d.). NSF Institute for Trustworthy AI in Law & Society (TRAILS). https://www.trails.umd.edu/news/detecting-ai-may-be-impossible-thats-a-big-problem-for-teachers

- Pindell, N. (2024). The Challenge of AI Checkers | Center for Transformative Teaching | Nebraska. https://teaching.unl.edu/ai-exchange/challenge-ai-checkers/